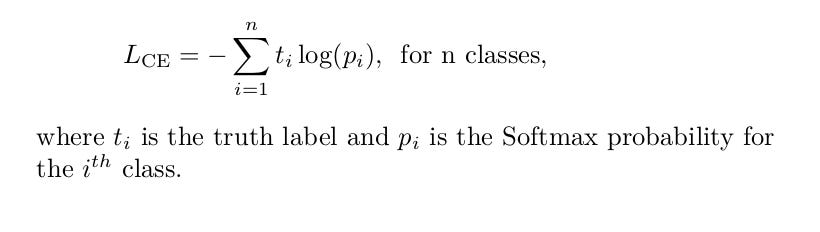

When doing multi-class classification, categorical cross-entropy loss is used a lot. It compares the predicted label with the true label and calculates the loss. Keras with the TensorFlow backend supports categorical cross-entropy and a variant of it, sparse categorical cross-entropy. Before Keras-MXNet v2.2.2, we only supported the former. We added sparse categorical cross-entropy in Keras-MXNet v2.2.2 and a new multi-hot categorical cross-entropy in v2.2.4. In this document, we review how these losses are implemented.

Categorical Cross Entropy

The following is the definition of cross-entropy when the number of classes is larger than 2:

$$\text{loss}_i = -\sum_{c} y^{\text{true}}_{i,c} \, \log\left(y^{\text{pred}}_{i,c}\right)$$

where $i$ indexes samples and $c$ indexes classes.

Sparse categorical cross-entropy and multi-hot categorical cross-entropy use the same equation and should produce the same output. The difference is that each variant covers a subset of use cases, and the implementations can differ to speed up the calculation.

The following is pseudo code for the implementation in the MXNet backend, following the equation:

    loss = - mx.sym.sum(y_true * mx.sym.log(y_pred), axis=axis)

Both y_true and y_pred have the shape (num_samples, num_classes). For example, in a 3-class classification problem, y_true holds one row per sample and one entry per class, where len(y_true) = num_samples and len(y_true[0]) = num_classes. y_pred has the same layout and holds the predicted probability of each class.

Sparse Categorical Cross Entropy

Definition

The only difference between sparse categorical cross-entropy and categorical cross-entropy is the format of the true labels. When we have a single-label, multi-class classification problem, the labels are mutually exclusive for each data entry, meaning each entry can belong to only one class. Then we can represent y_true using one-hot embeddings. For example, take y_true with 3 samples that belong to classes 2, 0, and 2, respectively. In the sparse format, each label is stored as a single class index rather than as a full row with one entry per class.

Improvements

This saves memory when the label is sparse (the number of classes is very large).
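To make the relationship between the two label formats concrete, here is a minimal pure-Python sketch of the equation loss = -sum(y_true * log(y_pred)) applied to both formats. It is not the Keras-MXNet implementation: the function names, the `to_one_hot` helper, and the probability values are assumptions chosen for illustration, and the example assumes exactly 3 classes. Only the class labels 2, 0, and 2 come from the text.

```python
import math


def categorical_crossentropy(y_true, y_pred):
    """Per-sample loss for one-hot (or multi-hot) rows: -sum_c y_true[c] * log(y_pred[c])."""
    return [
        # Entries with a zero label contribute nothing, so they are skipped;
        # this also avoids calling log() on a zero probability for them.
        -sum(t * math.log(p) for t, p in zip(true_row, pred_row) if t != 0)
        for true_row, pred_row in zip(y_true, y_pred)
    ]


def sparse_categorical_crossentropy(y_true, y_pred):
    """Per-sample loss for integer class labels: -log(y_pred[label])."""
    return [-math.log(pred_row[label]) for label, pred_row in zip(y_true, y_pred)]


def to_one_hot(labels, num_classes):
    """Hypothetical helper: expand integer class labels into one-hot rows."""
    return [[1 if c == label else 0 for c in range(num_classes)] for label in labels]


# Labels from the example in the text: 3 samples in classes 2, 0, and 2.
y_true_sparse = [2, 0, 2]
# Illustrative predicted probabilities (assumed values, one row per sample).
y_pred = [[0.1, 0.2, 0.7], [0.8, 0.1, 0.1], [0.3, 0.3, 0.4]]

y_true_one_hot = to_one_hot(y_true_sparse, num_classes=3)

dense_loss = categorical_crossentropy(y_true_one_hot, y_pred)
sparse_loss = sparse_categorical_crossentropy(y_true_sparse, y_pred)
```

Both functions return the same per-sample losses here, because a one-hot row selects exactly the one probability that the integer label indexes. The sparse version stores one integer per sample instead of num_classes entries, which is where the memory saving comes from when the number of classes is large.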
